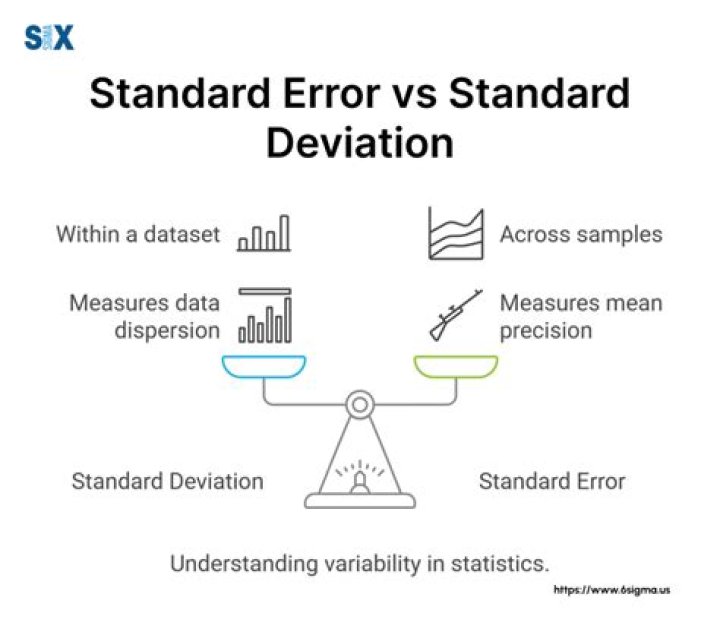

Standard deviation and standard error are two important concepts in statistics that are often misunderstood or confused with one another. Knowing the difference between these two terms can help you better understand the data that you are working with. In this article, we will explore the differences between standard deviation and standard error and provide examples of each concept.

What is Standard Deviation?

Standard deviation measures how spread out the values in a data set are around their mean. It is calculated by taking the square root of the variance and is expressed as a single number in the same units as the data. A small standard deviation means the values cluster tightly around the mean, while a large one means they are widely scattered. It can be used to compare the spread of different data sets and to assess how much the data points vary from the mean.

What is Standard Error?

Standard error measures how precisely a sample statistic, most commonly the sample mean, estimates the corresponding population value. It is the standard deviation of the statistic's sampling distribution, that is, of the values the statistic would take across repeated samples of the same size. For the mean, it is calculated by dividing the sample standard deviation by the square root of the sample size. A smaller standard error means the sample mean is likely to be closer to the true population mean, and the standard error shrinks as the sample size grows.

Difference between Standard Deviation and Standard Error

The main difference between standard deviation and standard error is what each one describes. Standard deviation describes the variability of the individual data points around the mean of a data set. Standard error describes the uncertainty in an estimate, such as the sample mean, computed from that data. Collecting more data does not systematically shrink the standard deviation, which settles near the population value, but it does reduce the standard error, which falls in proportion to the square root of the sample size.

How to Calculate Standard Deviation

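Standard deviation is calculated by taking the square root of the variance. The variance is the sum of the squared differences between each data point x and the mean μ, divided by the number of data points N. This gives the population standard deviation. When the data are a sample used to estimate the spread of a larger population, the sum is divided by n − 1 instead. The formula for the population standard deviation is: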

$$\mathrm{SD} = \sqrt{\frac{\sum (x - \mu)^2}{N}}$$
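As a minimal sketch of this calculation, the Python snippet below computes the standard deviation two ways: dividing by N (population) and by n − 1 (sample). The function names `population_sd` and `sample_sd` are illustrative and not part of the article. The standard library's `statistics.pstdev` and `statistics.stdev` are printed alongside only as a cross-check.

```python
import math
import statistics


def population_sd(data):
    """Square root of the mean squared deviation from the mean (divide by N)."""
    mean = sum(data) / len(data)
    return math.sqrt(sum((x - mean) ** 2 for x in data) / len(data))


def sample_sd(data):
    """Same calculation, but dividing by n - 1 (Bessel's correction)."""
    mean = sum(data) / len(data)
    return math.sqrt(sum((x - mean) ** 2 for x in data) / (len(data) - 1))


data = [5, 6, 7, 8, 9]
# Each line prints the hand-rolled value next to the standard-library value.
print(population_sd(data), statistics.pstdev(data))
print(sample_sd(data), statistics.stdev(data))
```

In practice the library functions are usually the better choice. The hand-written versions are here only to mirror the formula term by term.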

How to Calculate Standard Error

The standard error of the mean is calculated by dividing the sample standard deviation by the square root of the sample size. The sample standard deviation, s, is the square root of the sum of the squared differences between each data point and the sample mean, divided by n − 1, where n is the number of data points in the sample. The formula for the standard error of the mean is:

$$\mathrm{SE} = \frac{s}{\sqrt{n}}$$
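The following is a minimal sketch, assuming the quantity of interest is the standard error of the mean, that is, the sample standard deviation divided by the square root of the sample size. The function name `standard_error_of_mean` is hypothetical and chosen only for this illustration.

```python
import math
import statistics


def standard_error_of_mean(sample):
    """Sample standard deviation divided by the square root of the sample size."""
    n = len(sample)
    if n < 2:
        raise ValueError("need at least two observations")
    # statistics.stdev uses the n - 1 divisor (sample standard deviation).
    return statistics.stdev(sample) / math.sqrt(n)


sample = [5, 6, 7, 8, 9]
print(round(standard_error_of_mean(sample), 4))  # 0.7071
```

Because the divisor is the square root of n, quadrupling the sample size only halves the standard error, assuming the spread of the data stays about the same.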

Example of Standard Deviation

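Suppose you have a data set with five data points: 5, 6, 7, 8, and 9. The mean of the data set is 7. The squared differences from the mean are 4, 1, 0, 1, and 4, which sum to 10, so the variance is 10/5 = 2. The standard deviation is therefore √2 ≈ 1.41. This means that the data points typically lie about 1.4 units from the mean. If the five values were instead treated as a sample from a larger population, the variance would be 10/4 = 2.5 and the standard deviation √2.5 ≈ 1.58.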

Example of Standard Error

Suppose you have a sample of five data points, 5, 6, 7, 8, and 9, drawn from a larger population. The sample mean is 7 and the sample standard deviation is √(10/4) ≈ 1.58. The standard error of the mean is therefore 1.58/√5 ≈ 0.71. This means that, across repeated samples of this size, the sample mean would typically differ from the true population mean by about 0.7 units.
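One way to see what the standard error of a mean represents is to simulate repeated sampling. The sketch below assumes a hypothetical normally distributed population (mean 50, standard deviation 10, both invented for illustration), draws many samples of 25 values, and compares the spread of the resulting sample means with the population standard deviation divided by the square root of the sample size.

```python
import math
import random
import statistics

random.seed(0)  # fixed seed so the run is repeatable

POP_MEAN, POP_SD = 50.0, 10.0   # hypothetical population parameters
N, REPEATS = 25, 2000           # sample size and number of repeated samples

sample_means = []
for _ in range(REPEATS):
    sample = [random.gauss(POP_MEAN, POP_SD) for _ in range(N)]
    sample_means.append(statistics.mean(sample))

observed_spread = statistics.stdev(sample_means)  # SD of the sample means
theoretical_se = POP_SD / math.sqrt(N)            # 10 / 5 = 2.0

print(f"spread of sample means: {observed_spread:.2f}")
print(f"sigma / sqrt(n):        {theoretical_se:.2f}")
```

The two printed values should be close. With real data only one sample is available, so the population standard deviation is replaced by the sample standard deviation when estimating the standard error.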

Uses of Standard Deviation and Standard Error

Standard deviation and standard error answer different questions. Standard deviation is used to describe how much individual values vary around the mean, for example when summarizing a data set or comparing the spread of different data sets. Standard error is used to describe how precise an estimate such as the sample mean is, which makes it the basis for hypothesis testing and for estimating confidence intervals.
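As a rough illustration of the confidence-interval use, the sketch below builds a normal-approximation interval for a mean as the sample mean plus or minus a z multiplier times the standard error of the mean. The function name `mean_confidence_interval` is hypothetical. The normal approximation is an assumption made to stay within the standard library. For a sample as small as this one, a t-distribution multiplier would give a wider and more appropriate interval.

```python
import math
import statistics


def mean_confidence_interval(sample, level=0.95):
    """Normal-approximation confidence interval for the mean: mean +/- z * SE."""
    n = len(sample)
    mean = statistics.mean(sample)
    se = statistics.stdev(sample) / math.sqrt(n)  # standard error of the mean
    z = statistics.NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for 95%
    return mean - z * se, mean + z * se


low, high = mean_confidence_interval([5, 6, 7, 8, 9])
print(f"95% CI for the mean: ({low:.2f}, {high:.2f})")
```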

Limitations of Standard Deviation and Standard Error

Standard deviation and standard error are not perfect measures. Both are sensitive to outliers or extreme values: because the differences from the mean are squared, a single extreme value can inflate them substantially. They are also not always the best choice. For skewed data or data with outliers, robust measures such as the interquartile range or the median absolute deviation may be more appropriate. In addition, the usual formula for the standard error of the mean assumes that the observations are independent of one another.
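The effect of a single extreme value can be sketched as follows. The example appends an invented outlier (100) to a small data set and compares the sample standard deviation with the median absolute deviation, used here as one example of a more robust alternative. The helper `median_abs_deviation` is written for this illustration and returns the unscaled value.

```python
import statistics


def median_abs_deviation(data):
    """Median of the absolute deviations from the median (unscaled)."""
    med = statistics.median(data)
    return statistics.median([abs(x - med) for x in data])


clean = [5, 6, 7, 8, 9]
with_outlier = clean + [100]  # one invented extreme value

for label, values in (("clean", clean), ("with outlier", with_outlier)):
    sd = statistics.stdev(values)
    mad = median_abs_deviation(values)
    print(f"{label:13s} SD = {sd:6.2f}   MAD = {mad:4.1f}")
```

The standard deviation grows many times over when the outlier is added, while the median absolute deviation barely moves.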

Advantages of Standard Deviation and Standard Error

Standard deviation and standard error are easy to calculate and interpret. Standard deviation is expressed in the same units as the data, which makes it a direct summary of spread that can be compared across data sets. Standard error gives a simple way to judge how much an estimate such as the mean might change from sample to sample. Both are widely used in a variety of applications, making them useful tools for data analysis.

Conclusion

Standard deviation and standard error are two important concepts in statistics that are often confused or misunderstood. Standard deviation measures how much the individual values in a data set vary around the mean, while standard error measures how precisely a sample statistic such as the mean estimates the corresponding population value. Knowing the difference between these two terms can help you better understand the data that you are working with: use standard deviation to describe the spread of the data and standard error to describe the uncertainty of an estimate.